How could AI agent governance failures inside Amazon Web Services (AWS) be prevented before they contribute to real outages?

That question carries weight in 2026 because cloud infrastructure is no longer just supporting software; it is powering autonomous AI systems that can act on production environments in seconds.

The scale of cloud computing today makes governance failures far more consequential than they were even five years ago.

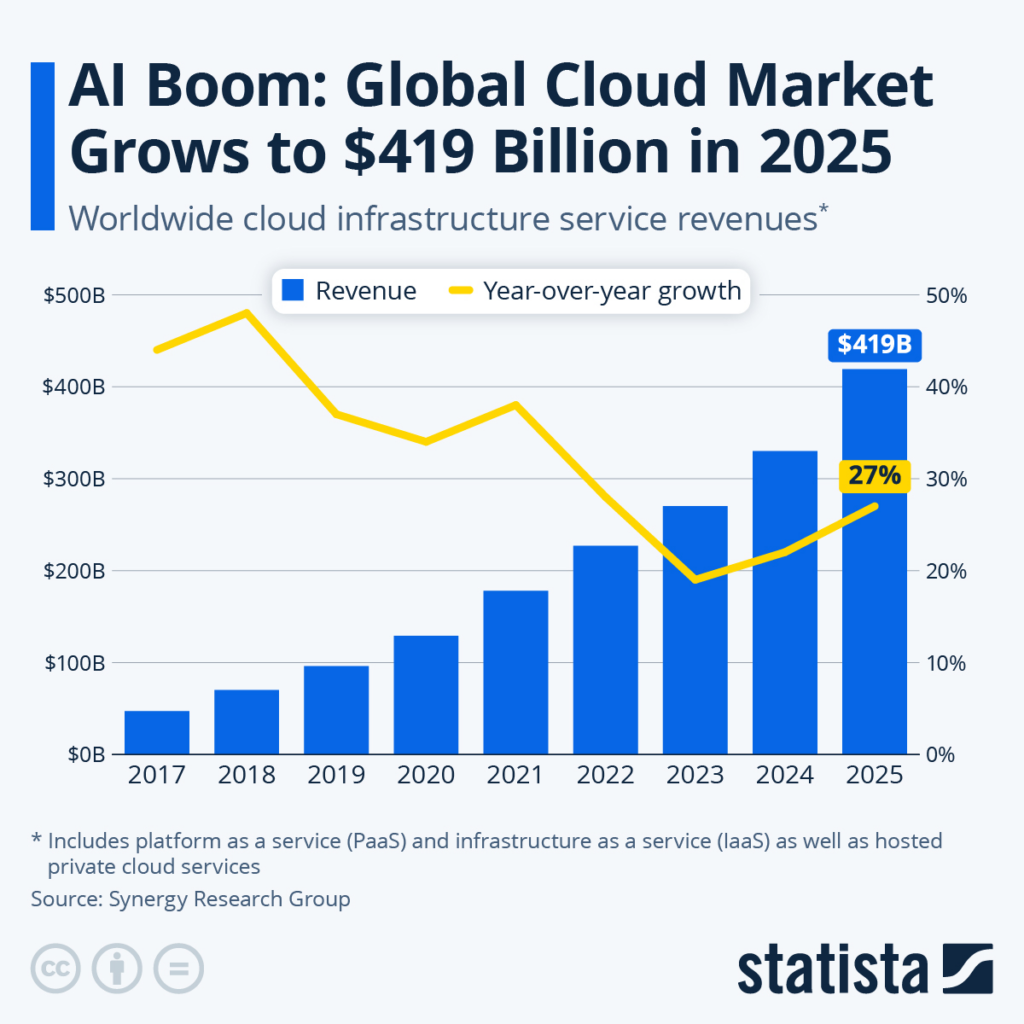

According to the research, global cloud infrastructure service revenues jumped by roughly $90 billion year over year to reach about $419 billion in 2025, an almost ninefold increase since 2017.

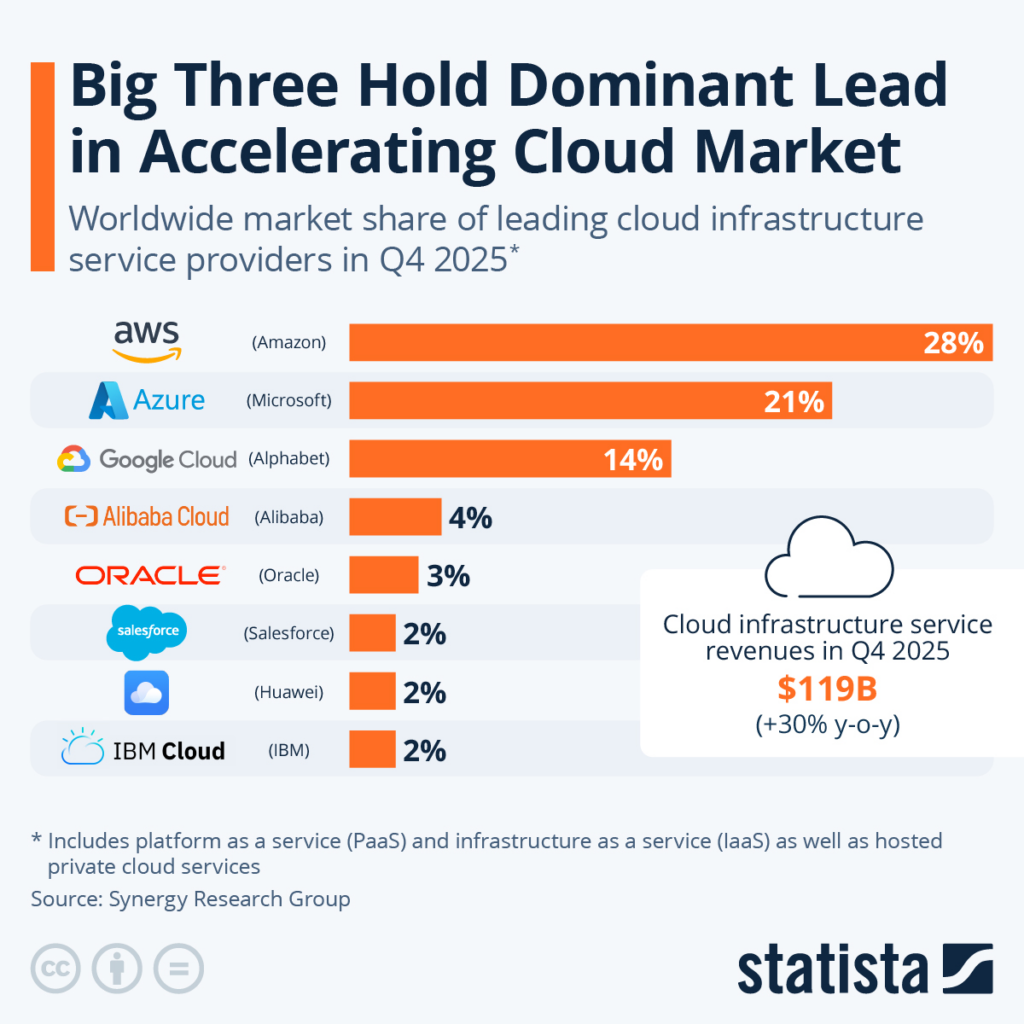

Within that $400+ billion market, Amazon Web Services remains the largest provider, accounting for around 28% of global cloud infrastructure revenue in the most recent quarter.

That translates to more than $100 billion in annual revenue, with AWS generating $45.6 billion in operating profit in 2025, nearly 60% of Amazon’s total operating profit. (Source)

At this scale, even a single misconfiguration involving an AI agent can ripple across thousands of enterprise workloads – especially when those changes are executed automatically.

AI coding agents are no longer limited to suggesting code; they execute deployment scripts, modify infrastructure configurations, and interact directly with Identity and Access Management (IAM) systems.

Without layered human‑in‑the‑loop controls, staged validation pipelines, and structured rollback safeguards, AI agent governance becomes a structural risk rather than a productivity advantage.

This blog examines how recent AWS incidents involving internal AI tools exposed governance gaps, what kind of governance architecture could have limited their blast radius, and why CodeConductor approaches AI agent governance differently by embedding human oversight into every stage of AI‑driven development.

Let's get started.

In This Post

- How Did the AWS AI Agent Outages Expose Governance Failures?

- What Governance Architecture Could Have Prevented the AWS AI Agent Outages?

- How Does CodeConductor Provide Safer AI Agent Governance Than Autonomous Systems?

- What is the Enterprise Standard for Safe AI Agents in Cloud Infrastructure?

- The Infrastructure Wake-Up Call

- FAQs

How Did the AWS AI Agent Outages Expose Governance Failures?

The Amazon Web Services AI agent outages exposed a governance gap, not just a coding mistake.

The issue was not that artificial intelligence wrote bad code. The issue was that an AI coding agent had the authority to execute infrastructure-level changes without enforced human validation.

Inside modern cloud infrastructure, AI coding agents can do more than suggest improvements.

They can

- Trigger deployment pipelines,

- Modify configuration files,

- Interact with identity and access management systems, and

- Apply infrastructure-as-code changes directly to live environments.

When those actions occur inside an ecosystem the size of Amazon Web Services, the operational impact multiplies instantly.

At AWS scale, production environments are interconnected across regions, services, and customer workloads.

A misapplied configuration, an unintended environment reset, or a permissions oversight does not remain isolated. It propagates across dependent systems. Cloud-native architectures rely on automation for speed, but automation without layered oversight increases blast radius.

The outages revealed three structural governance weaknesses:

- Over-permissioned AI agents: AI systems were allowed to execute changes that affected live infrastructure rather than being restricted to sandbox or staging environments.

- Missing enforced human-in-the-loop checkpoints: There was no mandatory execution gate requiring human approval before destructive or high-impact changes were applied.

- Insufficient rollback containment: When automation triggered unintended changes, restoration depended on reactive correction rather than pre-configured, immutable state recovery.

In high-scale cloud ecosystems, governance must scale with automation. Execution authority should never be equal to suggestion authority. An AI agent that generates code should not automatically deploy it.

The AWS outages demonstrated that once autonomous systems cross the boundary from assistance to execution, governance architecture becomes the primary risk control layer.

This distinction matters because AI agent governance is becoming foundational to cloud reliability.

The real lesson is not to avoid AI agents. It is to define

- Strict permission boundaries,

- Staged validation pipelines, and

- Mandatory human approval layers before autonomous systems operate in production.

If the outages exposed weaknesses in AI agent governance, the next logical question is clear:

What Governance Architecture Could Have Prevented the AWS AI Agent Outages?

The AWS outages were not caused by a lack of intelligence. They were caused by a lack of enforced execution controls. A resilient governance architecture separates AI generation, execution authority, and production impact into clearly defined control layers.

Here is what should have been structurally embedded before any AI coding agent was allowed to operate in live cloud environments.

1. Execution-Gated AI Agents

AI systems that generate infrastructure changes must not have automatic deployment authority. Code generation and infrastructure execution should operate in separate trust zones.

A proper governance model enforces:

- AI can propose changes

- CI/CD systems validate those changes

- A human explicitly authorizes production deployment

In high-scale environments like Amazon Web Services, this separation prevents AI-generated misconfigurations from instantly affecting customer workloads.
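
The propose, validate, authorize sequence above can be sketched as a small state machine. This is a minimal illustration, not a description of how AWS or any specific CI/CD product works: `ChangeRequest`, `GateError`, and the function names are hypothetical, and a real system would enforce these gates in the pipeline itself rather than in application code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Set


class ChangeState(Enum):
    PROPOSED = "proposed"
    VALIDATED = "validated"
    APPROVED = "approved"
    DEPLOYED = "deployed"


class GateError(Exception):
    """Raised when a change tries to skip a required gate."""


@dataclass
class ChangeRequest:
    # Hypothetical record for an AI-proposed infrastructure change.
    change_id: str
    diff: str
    state: ChangeState = ChangeState.PROPOSED
    approved_by: Optional[str] = None


def mark_validated(change: ChangeRequest, checks_passed: bool) -> None:
    # CI/CD validation: only a proposed change with passing checks moves on.
    if change.state is not ChangeState.PROPOSED:
        raise GateError("only proposed changes can be validated")
    if not checks_passed:
        raise GateError("validation checks failed")
    change.state = ChangeState.VALIDATED


def approve(change: ChangeRequest, approver: str, agent_ids: Set[str]) -> None:
    # Human authorization: an AI agent identity can never act as approver.
    if change.state is not ChangeState.VALIDATED:
        raise GateError("only validated changes can be approved")
    if approver in agent_ids:
        raise GateError("agents cannot approve changes")
    change.approved_by = approver
    change.state = ChangeState.APPROVED


def deploy(change: ChangeRequest) -> str:
    # Deployment is refused unless a human approval is on record.
    if change.state is not ChangeState.APPROVED:
        raise GateError("deployment requires human approval")
    change.state = ChangeState.DEPLOYED
    return f"deployed {change.change_id} (approved by {change.approved_by})"
```

The design choice worth noting is that `deploy` checks state rather than trusting its caller, so an agent that calls it directly on a freshly proposed change is rejected.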

2. Environment-Level Containment Policies

Every AI-triggered change should be forced through sequential containment layers:

- Sandbox simulation

- Staging validation

- Controlled production rollout

Production should never be the first exposure point. Containment reduces blast radius and provides measurable validation before live impact.
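
As a rough sketch of sequential containment, the function below runs a change through each stage in order and stops at the first failure. The stage names come from the list above; the `promote` function and the per-stage check callables are assumptions made for illustration.

```python
from typing import Callable, Dict, List

# Ordered containment layers; production is always last.
STAGES: List[str] = ["sandbox", "staging", "production"]


def promote(change_id: str, checks: Dict[str, Callable[[str], bool]]) -> List[str]:
    """Run a change through each stage in order and return the stages it passed.

    A stage with no registered check counts as a failure (fail closed), so a
    change cannot reach production by skipping an earlier layer.
    """
    passed: List[str] = []
    for stage in STAGES:
        check = checks.get(stage)
        if check is None or not check(change_id):
            break
        passed.append(stage)
    return passed
```

If the staging check fails, the production check is never invoked, which is the property that keeps production from being the first exposure point.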

3. Permission Minimization by Default

AI agents should operate under restrictive, scoped permissions. Destructive actions such as environment resets, mass configuration changes, or IAM modifications must require temporary, auditable privilege elevation.

Role-based access control must enforce:

- Task-specific privileges

- Time-bound elevation

- Automatic privilege revocation

Without this structure, automation inherits excessive authority.
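
One way to picture task-specific, time-bound elevation is a small in-memory broker. This is a sketch under stated assumptions: `PermissionBroker`, the baseline action names, and the ticket-style `reason` are all hypothetical, and in a real cloud environment this role would be played by the provider's own IAM and temporary-credential mechanisms.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Tuple


@dataclass(frozen=True)
class Grant:
    agent_id: str
    action: str
    expires_at: datetime
    reason: str


class PermissionBroker:
    # Hypothetical low-risk actions an agent may always perform.
    BASELINE = frozenset({"read_config", "propose_change"})

    def __init__(self) -> None:
        self._grants: List[Grant] = []
        self.audit_log: List[Tuple[str, str, str, str]] = []

    def elevate(self, agent_id: str, action: str, minutes: int,
                reason: str, now: datetime) -> Grant:
        # Task-specific, time-bound elevation; every grant is recorded.
        grant = Grant(agent_id, action, now + timedelta(minutes=minutes), reason)
        self._grants.append(grant)
        self.audit_log.append(("elevate", agent_id, action, reason))
        return grant

    def is_allowed(self, agent_id: str, action: str, now: datetime) -> bool:
        if action in self.BASELINE:
            return True
        # Automatic revocation: expired grants are dropped on every check.
        self._grants = [g for g in self._grants if g.expires_at > now]
        return any(g.agent_id == agent_id and g.action == action
                   for g in self._grants)
```

Passing `now` explicitly keeps the sketch deterministic; a production version would read a trusted clock instead.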

4. Immutable State Snapshots Before Deployment

Before any infrastructure modification, automated snapshotting should preserve the previous system state.

This allows:

- Instant rollback

- Minimal downtime

- Deterministic restoration

Rollback should be automatic, not reactive. If system behavior deviates beyond predefined thresholds, restoration triggers immediately.
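
The snapshot-then-restore pattern can be illustrated with a toy configuration store. Everything here is an assumption made for the example: `ConfigStore` keeps state in memory, `health_check` is a caller-supplied function returning an error rate, and `max_error_rate` stands in for the "predefined threshold" mentioned above.

```python
import copy
from typing import Any, Callable, Dict, List


class ConfigStore:
    """Toy in-memory store that snapshots state before every change."""

    def __init__(self, initial: Dict[str, Any]) -> None:
        self._state = copy.deepcopy(initial)
        self._snapshots: List[Dict[str, Any]] = []

    @property
    def state(self) -> Dict[str, Any]:
        # Return a copy so callers cannot mutate live state directly.
        return copy.deepcopy(self._state)

    def apply_with_rollback(self, change: Dict[str, Any],
                            health_check: Callable[[Dict[str, Any]], float],
                            max_error_rate: float) -> bool:
        # 1. Preserve the previous state before touching anything.
        snapshot = copy.deepcopy(self._state)
        self._snapshots.append(snapshot)
        # 2. Apply the change.
        self._state.update(change)
        # 3. Restore immediately if behavior deviates beyond the threshold.
        if health_check(self._state) > max_error_rate:
            self._state = copy.deepcopy(snapshot)
            return False
        return True
```

Because the snapshot is taken before the change and restored by the same call, rollback does not depend on someone noticing the problem and reacting.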

5. Continuous AI Action Logging and Audit Traceability

Every AI-executed action must generate:

- A timestamped execution record

- A linked approval record

- A change-diff summary

- A rollback checkpoint reference

Auditability is not just for compliance. It strengthens operational accountability and accelerates incident response.
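
A minimal sketch of such a record, assuming a simple JSON-lines log: the field names mirror the four items above, while `AuditRecord`, `build_record`, and `to_log_line` are hypothetical names chosen for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    executed_at: str          # ISO 8601 timestamp of execution
    agent_id: str
    action: str
    approval_id: str          # link to the human approval record
    diff_summary: str         # short description of what changed
    rollback_checkpoint: str  # reference to the pre-change snapshot


def build_record(agent_id: str, action: str, approval_id: str,
                 diff_summary: str, rollback_checkpoint: str,
                 now: Optional[datetime] = None) -> AuditRecord:
    # Refuse to log an action that has no approval or no rollback point.
    if not approval_id:
        raise ValueError("approval_id is required")
    if not rollback_checkpoint:
        raise ValueError("rollback_checkpoint is required")
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return AuditRecord(timestamp, agent_id, action, approval_id,
                       diff_summary, rollback_checkpoint)


def to_log_line(record: AuditRecord) -> str:
    # One JSON object per line keeps the log easy to search during incidents.
    return json.dumps(asdict(record), sort_keys=True)
```

Making the record frozen and rejecting incomplete entries means a missing approval surfaces at write time rather than during an incident review.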

6. Deployment Velocity Controls

AI systems increase speed. Governance must moderate that speed.

Production pipelines should enforce:

- Rate limits on infrastructure changes

- Progressive traffic shifting

- Canary deployments

- Automated anomaly detection before full rollout

Automation without pacing controls amplifies systemic risk.
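
Two of these pacing controls, rate limits and canary-style traffic shifting, can be sketched in a few lines. The class and function names, the sliding-window approach, and the `error_rate_at` callback are assumptions for illustration rather than any particular deployment tool's behavior.

```python
from collections import deque
from typing import Callable, Deque, List


class ChangeRateLimiter:
    """Sliding-window limit on infrastructure changes."""

    def __init__(self, max_changes: int, window_seconds: float) -> None:
        self.max_changes = max_changes
        self.window_seconds = window_seconds
        self._timestamps: Deque[float] = deque()

    def allow(self, now: float) -> bool:
        # Forget changes that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_changes:
            return False
        self._timestamps.append(now)
        return True


def canary_rollout(steps: List[int],
                   error_rate_at: Callable[[int], float],
                   max_error_rate: float) -> int:
    """Shift traffic through the given percentages in order.

    Returns the final traffic percentage, or 0 if any step exceeded the
    error threshold and the rollout was aborted.
    """
    for percent in steps:
        if error_rate_at(percent) > max_error_rate:
            return 0
    return steps[-1] if steps else 0
```

For example, `canary_rollout([1, 10, 50, 100], measure, 0.01)` would only reach full traffic if the hypothetical `measure` callback stays at or below a 1% error rate at every step.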

A cloud ecosystem generating more than $400 billion annually cannot rely on reactive correction. Governance must be built into deployment architecture, not layered on after failure.

The question is no longer whether AI agents should assist engineering teams. The question is how execution authority is structured before those agents are trusted with live infrastructure.

How Does CodeConductor Provide Safer AI Agent Governance Than Autonomous Systems?

If governance architecture is the missing layer in autonomous AI execution, then the real differentiator is not how powerful an AI agent is — it is how controlled that agent is.

CodeConductor is designed around structured AI agent governance, not autonomous execution freedom. The platform does not treat AI as an unsupervised operator. Instead, it embeds human oversight, permission boundaries, and deployment controls directly into the development lifecycle.

Here is how that approach differs structurally from fully autonomous systems.

1. AI-Assisted Generation, Not AI-Automated Deployment

In fully autonomous environments, AI agents can move from suggestion to execution without enforced separation. CodeConductor maintains a structural boundary between:

- Code generation

- Infrastructure configuration

- Deployment approval

AI can propose full-stack application structures, APIs, and database logic. But execution remains gated through structured workflows. This prevents silent propagation of unintended infrastructure changes.

The core difference is architectural: execution authority is never assumed.

2. Embedded Human-in-the-Loop Controls

Human validation is not optional or post-incident. It is embedded before deployment.

Deployment checkpoints require:

- Review of generated changes

- Confirmation before production rollout

- Clear accountability mapping

This ensures that AI acceleration does not override operational judgment. Human-in-the-loop systems are particularly critical in cloud infrastructure environments where a single misconfiguration can affect scaling, billing, security posture, or uptime.

3. Environment Segmentation by Design

Rather than allowing AI agents to interact directly with production systems, controlled platforms isolate:

- Development environments

- Staging environments

- Production environments

This containment-first approach reduces blast radius. Even if AI-generated logic requires revision, exposure remains limited until validated.

4. Permission-Scoped Execution

Instead of granting broad infrastructure privileges, execution operates under constrained roles.

Governance principles include:

- Role-based access segmentation

- Scoped execution rights

- Clear visibility into deployment impact

This reduces the risk of unintended IAM or configuration changes affecting unrelated services.

5. Transparent Code Ownership and Inspectability

Autonomous AI systems can create black-box risk. If infrastructure changes are difficult to inspect before deployment, governance weakens.

CodeConductor maintains full code transparency. Teams can review, modify, and audit generated output before execution. This reinforces responsible automation rather than blind acceleration.

The Structural Difference

The difference between autonomous AI and governed AI is not capability — it is control architecture.

In large-scale ecosystems like Amazon Web Services, AI without execution boundaries increases systemic fragility. Platforms built around AI agent governance reduce that fragility by enforcing structured approval, containment, and accountability.

The future of AI in DevOps is not about removing humans from the loop. It is about designing systems where AI amplifies productivity while governance protects infrastructure stability.

If structured AI governance is possible at the platform level, the broader question becomes larger than any single provider. What does responsible AI control look like at the enterprise standard, especially as autonomous systems become embedded across cloud infrastructure?

What is the Enterprise Standard for Safe AI Agents in Cloud Infrastructure?

Preventing outages is an engineering objective. Preventing systemic AI risk is an organizational discipline.

The next stage in AI evolution is not about adding more safeguards to deployment pipelines. It is about defining how enterprises govern autonomous decision-making across technology, security, finance, and executive leadership.

When companies operate inside ecosystems powered by platforms like Amazon Web Services, AI agents influence

- Revenue,

- Uptime,

- Customer Trust, and

- Regulatory Exposure.

Governance must therefore expand beyond DevOps controls and into executive accountability.

A mature enterprise AI governance model includes five distinct layers.

1. Board-Level AI Oversight

AI infrastructure risk should be visible at the executive level.

Organizations leading in AI maturity establish:

- Formal AI risk review committees

- Quarterly reporting on AI system impact

- Defined accountability ownership

When AI agents influence production systems, they influence financial performance. Governance must reflect that reality.

2. AI Risk Classification Frameworks

Not all AI actions carry equal impact.

Enterprises should classify AI operations into tiers, such as:

- Advisory (no execution authority)

- Controlled execution (human-approved changes)

- Autonomous bounded execution (predefined safe domains)

This structured risk segmentation allows innovation without uncontrolled exposure.
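
To make the tiers concrete, here is one possible classification rule. The three tiers are taken from the list above; the `SAFE_DOMAINS` entries and the `classify` logic are purely hypothetical, and each enterprise would define its own.

```python
from enum import Enum


class Tier(Enum):
    ADVISORY = "advisory"                      # no execution authority
    CONTROLLED = "controlled"                  # human-approved changes
    BOUNDED_AUTONOMOUS = "bounded_autonomous"  # predefined safe domains


# Hypothetical actions an organization has pre-approved for autonomous execution.
SAFE_DOMAINS = frozenset({"restart_stateless_service", "scale_within_limits"})


def classify(action: str, executes: bool) -> Tier:
    # Suggestions that never execute are advisory by definition.
    if not executes:
        return Tier.ADVISORY
    # Executing actions are autonomous only inside a predefined safe domain.
    if action in SAFE_DOMAINS:
        return Tier.BOUNDED_AUTONOMOUS
    # Everything else defaults to the human-approved tier.
    return Tier.CONTROLLED


def needs_human_approval(tier: Tier) -> bool:
    return tier is Tier.CONTROLLED
```

The default matters most here: an unrecognized executing action falls into the human-approved tier rather than the autonomous one.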

3. Cross-Functional Governance Alignment

AI governance cannot live solely within engineering.

It requires coordination across:

- Security teams

- Compliance teams

- Legal counsel

- Finance leadership

Cloud-scale automation affects regulatory exposure, audit requirements, and contractual service guarantees. AI oversight must reflect cross-functional impact.

4. AI Operational Transparency Metrics

Enterprises should measure:

- Percentage of AI-generated production changes

- Mean time to detect AI-triggered anomalies

- Human override frequency

- Deployment rollback rates

Quantified governance enables continuous refinement rather than reactive correction.
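
As an illustration of how these four metrics might be computed from a change log, consider the sketch below. The `ChangeEvent` fields and the choice of denominators (for example, override frequency measured over AI-generated changes only) are assumptions; an organization would adapt them to its own definitions.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ChangeEvent:
    # Hypothetical per-change record drawn from deployment logs.
    ai_generated: bool
    rolled_back: bool
    human_overrode: bool
    detect_seconds: Optional[float] = None  # set only if an anomaly was detected


def governance_metrics(events: List[ChangeEvent]) -> Dict[str, Optional[float]]:
    total = len(events)
    if total == 0:
        return {}
    ai_events = [e for e in events if e.ai_generated]
    detect_times = [e.detect_seconds for e in ai_events
                    if e.detect_seconds is not None]
    return {
        # Share of production changes that were AI-generated, in percent.
        "ai_change_pct": 100 * len(ai_events) / total,
        # Mean time to detect AI-triggered anomalies, in seconds.
        "mean_time_to_detect_s": (sum(detect_times) / len(detect_times)
                                  if detect_times else None),
        # Fraction of AI-generated changes a human overrode.
        "human_override_rate": (sum(e.human_overrode for e in ai_events)
                                / len(ai_events) if ai_events else None),
        # Fraction of all changes that were rolled back.
        "rollback_rate": sum(e.rolled_back for e in events) / total,
    }
```

Returning `None` when there is no data avoids reporting a misleading zero for a metric that has not been measured yet.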

5. Innovation-Risk Balance Strategy

The most advanced enterprises do not slow AI adoption. They formalize it.

They define:

- Acceptable automation velocity

- Defined experimentation boundaries

- Clear escalation paths

AI becomes a managed growth engine, not an uncontrolled accelerator.

Why This Model Matters Now

Organizations that treat AI governance as a maturity journey gain operational resilience. Those that treat it as just another feature risk systemic instability.

CodeConductor aligns with this enterprise maturity model by integrating the following into the development lifecycle:

- Structured approval workflows,

- Clear execution boundaries, and

- Transparent oversight mechanisms.

AI acceleration does not have to mean AI fragility.

The Infrastructure Wake-Up Call

The AWS outages were a warning, not an anomaly. As AI agents gain operational authority, governance must evolve from technical guardrails to enterprise-wide accountability.

If your organization is deploying AI agents in cloud infrastructure, the question is no longer whether controls are needed.

The question is whether your governance architecture is mature enough to scale with automation.

Because the future of AI in cloud infrastructure will belong to organizations that control it, not just those that deploy it.

FAQs

What Is AI Agent Governance in Cloud Infrastructure?

AI agent governance defines policies, permissions, and approval workflows that control how AI systems generate and execute infrastructure changes within cloud environments to prevent unauthorized deployment or operational disruption.

How Can Enterprises Audit AI-Generated Infrastructure Changes?

Enterprises audit AI-generated changes by logging execution records, linking approvals to deployments, tracking configuration diffs, and preserving rollback snapshots to ensure traceability, accountability, and compliance visibility.

What Compliance Risks Do Autonomous AI Agents Create?

Autonomous AI agents create compliance risk when unsupervised infrastructure changes violate security policies, data residency laws, or service-level agreements, increasing regulatory exposure and contractual liability.

How Does AI Agent Risk Differ From Traditional DevOps Risk?

Traditional DevOps risk stems from human error; AI agent risk stems from automated execution at scale, where speed and replication amplify misconfigurations across multiple cloud environments simultaneously.

What Metrics Should Organizations Track for AI Governance Readiness?

Organizations should track AI deployment approval rates, anomaly detection time, rollback frequency, privilege escalation logs, and percentage of AI-executed production changes to measure governance maturity.

Founder CodeConductor